Foreboding headlines describe a dystopian future where ChatGPT replaces human jobs, entices students into pervasive cheating, and finally offers a strong competitor to Google search. There is some truth and a lot of overreaction in these headlines. Even Last Week Tonight with John Oliver did a hilariously accurate segment on it. What do they all have in common? There are ethical issues at stake in all of them.

Issues of ethics and AI raise a host of interesting questions.

Do technologies like ChatGPT perpetuate bias and inequality based on design and deployment?

What is the acceptable use of AI, and what are a user’s experiences and responsibilities when using it? Does it matter if the user is an institution and not a person? Lastly, if rational decision-making (using information to make decisions) defines being human, or at least what makes humans moral, then do we need to rethink who or what is human? Should we rethink what features make humans morally different from other animals and machines? These questions fall into a few categories: ethical AI, user ethics, and how technological innovation changes ethics.

Let’s consider a student at Garrett, where the Stead Center is located. You’re probably thinking the example will be about plagiarism and writing papers, but no, that’s not the example! Educators generally agree that instructors need to be more creative and engage ChatGPT rather than try to prevent students from using it. Instead, how might a pastor use an AI like ChatGPT? Should they use it to write sermons or prayers?

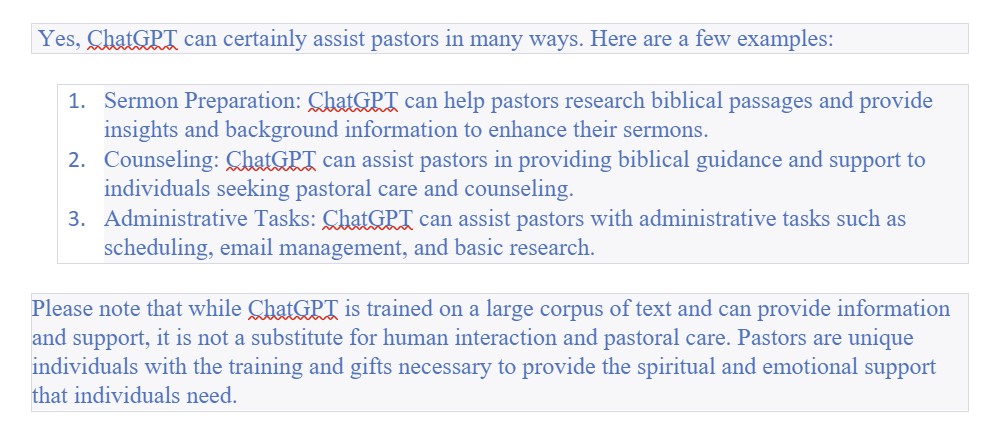

Rather than me telling you how this type of AI might help pastors and what its limits might be, why not ask the AI? Here is what ChatGPT said:

Prompt: Can ChatGPT help pastors?

ChatGPT response:

Please note that while ChatGPT is trained on a large corpus of text and can provide information and support, it is not a substitute for human interaction and pastoral care. Pastors are unique individuals with the training and gifts necessary to provide the spiritual and emotional support that individuals need.

Does the fluid, conversational written response surprise you? How about the knowledge of three primary aspects of a pastor’s job? What about the caveat about spiritual care? I was surprised! This is the best AI with which I have interacted. It runs on a neural network using deep learning and natural language processing. It’s clearly better than Siri, Alexa, or other smart assistants, but is it ethical?

How does the design of ChatGPT lead to ethical outcomes and resist biases?

Well, as a seminary professor, I’m glad to see it did not say, “ChatGPT can write your sermons for you,” which it can. Instead, it focused on how it could assist in, not replace, the main duties of pastoring, and it even added a caution that it is “not a substitute for human interaction and pastoral care.” It affirmed the unique training and gifts of pastors. In other prompt responses, ChatGPT likewise noted that it may not be accurate and can only respond based on the information available. It seems the AI is very fallible, just like humans, and the designers built in safeguards to acknowledge limitations. This is an example of ethical design.

The platform promotes ethical use when it reminds users that it employs another AI tool for content moderation “to warn or block certain types of unsafe content.” Of course, there are differing opinions about what is “unsafe content,” and things will slip through. But the designers are promoting ethical AI and ethical use of the AI. They seek user feedback to increase accuracy and even monitor user prompts that might input “unsafe content.” User interaction with the AI shapes the system and how it learns. Users have other ethical responsibilities as well. Just as I would credit a book when sharing an idea from it in a sermon, I should tell the congregation when ChatGPT helped me with my research or writing. It’s dishonest to steal ideas and present them as our own, even if the AI can’t “catch you.” That is to say, human interaction with AI impacts moral formation.

Technology, like the humans who design it, reinforces social biases, as has been well documented in relation to race, gender, and disability. In a recent conversation with an Asian American pastor about ChatGPT, I noted that these systems can make it seem like they are not influenced by biases of race and gender. To which they replied, “Yeah, I was thinking the other night how the ChatGPT responses sound like my white friends, albeit smart white friends.” The type of English, and it only speaks English, is based on a specific linguistic preference system that favors a white American, Eurocentric style of writing. When it responded to Christian questions, ChatGPT listed responses related to scripture or the Bible first, which makes assumptions about Christian sources of authority and demotes things like tradition, doctrine, or religious experience. These might feel subtle, but they are examples of the ways users could be shaped by the AI, and ways users might shift the AI as we give it feedback.

Most everyday ethical approaches to technology rely on rules: lists of do’s and don’ts. Given the rapid shifts in technology, these lists are usually out of date by the time we make them.

As demonstrated by our example, we can’t say whether ChatGPT is bad or good, or whether it should or shouldn’t be used by pastors or seminary students. What we can probably say is something about how it should be used and when we should tell people we have been assisted by it. That will require a new ethical framework, one that is flexible and adaptive, as I’ve described in Christian Ethics for a Digital Society. We need to consider how Christian values like honesty, justice, kindness, compassion, respect, generosity, and love direct our actions in a specific context and for a specific community. This type of ethical discernment, which takes time and critical engagement, means faith communities need to be proactive about shared conversations related to digital ethics.

To hear more from Dr. Ott on ChatGPT and AI ethics, listen to her recent podcast appearance on “The Uncovered Dish,” a Christian leadership podcast of the greater New Jersey UMC.